LLM Agent Automation: Complete Integration Guide 2026

Integrating LLM-based agents changes how businesses deploy intelligent automation in 2026. As large language models evolve beyond simple chatbots into autonomous agents capable of complex workflows, knowing how to build and run those agents becomes a competitive advantage. This guide covers implementation strategies, architecture patterns, and real-world applications of LLM agent automation.

What Is AI Agent Automation LLM Integration?

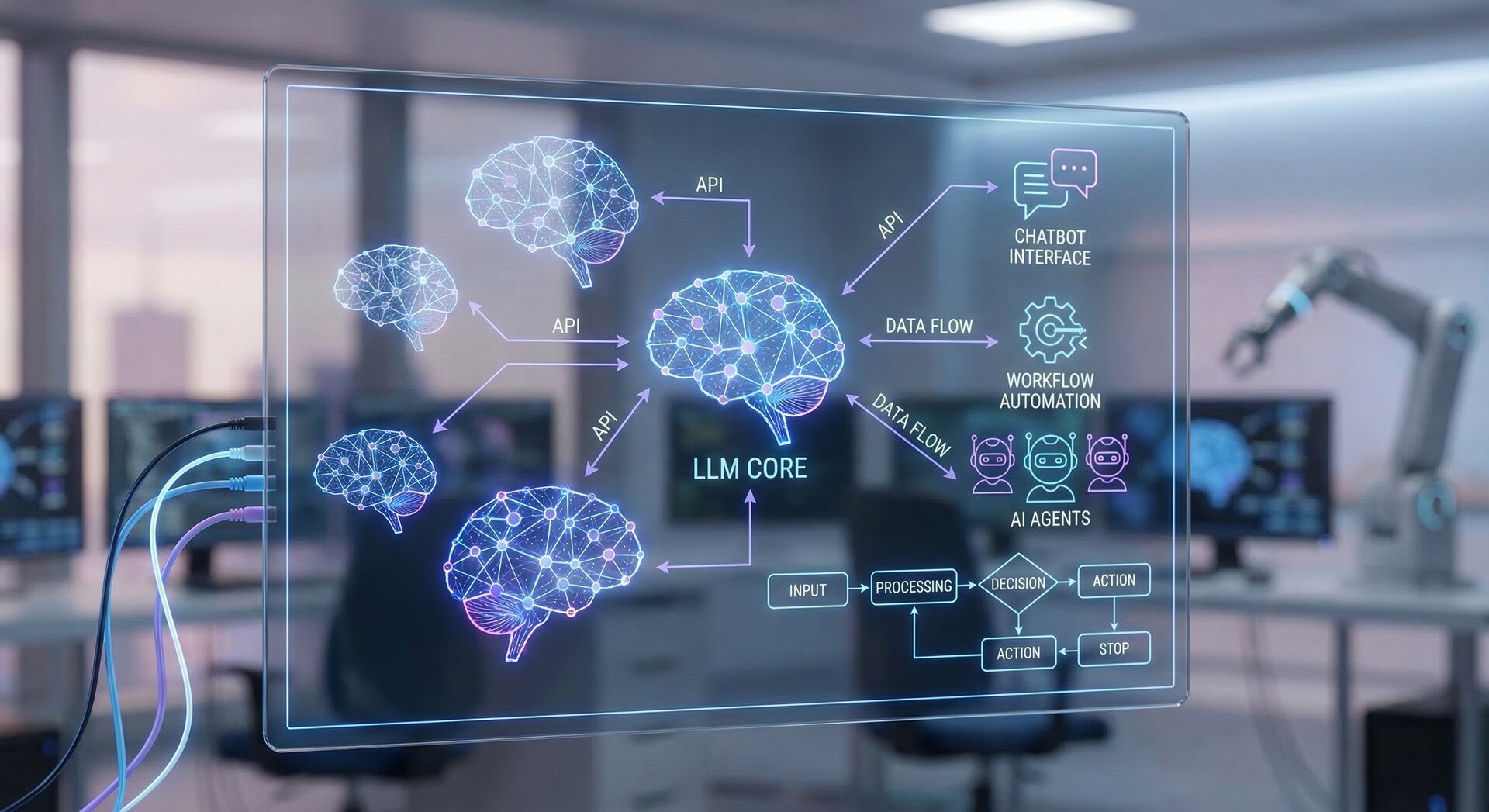

AI agent automation llm refers to combining large language models with autonomous agent frameworks that execute multi-step tasks. Unlike basic LLM chatbots that respond to queries, ai agent automation llm systems plan workflows, use tools, make decisions, and operate with minimal human intervention.

The core components of ai agent automation llm include:

- LLM Core – Models like GPT-5.4, Claude Sonnet 4.6, or Gemini 3.1 Pro providing reasoning capabilities

- Agent Framework – Orchestration layer managing task planning and execution

- Tool Integration – API connections enabling agents to interact with external systems

- Memory Systems – Context retention across sessions and workflows

- Guardrails – Safety mechanisms preventing harmful or unintended actions

According to recent enterprise deployments, modern LLM agent systems achieve task completion rates of up to 80% on complex workflows that previously required human intervention.

How LLM Agent Automation Works

An LLM agent follows a reasoning-action loop:

- Task Decomposition – The LLM analyzes the objective and breaks it into subtasks

- Tool Selection – The agent identifies which APIs, databases, or services to access

- Action Execution – The agent framework calls tools and retrieves results

- Result Evaluation – The LLM assesses outputs and determines next steps

- Iteration – The cycle repeats until task completion or failure detection

This architecture lets LLM agents handle tasks like customer support ticket resolution, data analysis pipelines, and content generation workflows without hardcoded logic.
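The loop can be sketched in a few lines of plain Python. This is only an illustration under stated assumptions: `scripted_llm` is a hypothetical stand-in for a real model call, and the two tools (`lookup_order`, `send_reply`) are invented for the example.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical tools; a real agent would wrap APIs or databases here.
TOOLS: Dict[str, Callable[[str], str]] = {
    "lookup_order": lambda order_id: f"order {order_id}: shipped",
    "send_reply": lambda text: f"sent: {text}",
}


def scripted_llm(objective: str, history: List[Tuple[str, str]]) -> Tuple[str, str]:
    """Stand-in for a model call: returns (tool_name, argument) or ("finish", answer)."""
    if not history:
        return "lookup_order", "A-17"
    if len(history) == 1:
        return "send_reply", history[-1][1]
    return "finish", history[-1][1]


def run_agent(objective: str, llm=scripted_llm, max_steps: int = 5) -> str:
    history: List[Tuple[str, str]] = []
    for _ in range(max_steps):                 # iteration with a hard stop
        action, arg = llm(objective, history)  # decomposition and tool selection
        if action == "finish":
            return arg
        if action not in TOOLS:                # failure detection
            raise ValueError(f"unknown tool: {action}")
        result = TOOLS[action](arg)            # action execution
        history.append((action, result))       # result fed back for evaluation
    raise RuntimeError("step limit reached without completion")


print(run_agent("Where is order A-17?"))  # prints "sent: order A-17: shipped"
```

The `max_steps` cap is the important design choice: without it, a model that never returns "finish" would loop indefinitely.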

Popular LLM Agent Frameworks

Several frameworks simplify building LLM agent systems in 2026:

LangChain and LangGraph

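LangChain provides Python and JavaScript libraries for connecting LLMs to external data and tools. LangGraph extends this with state management for complex agent workflows. The framework supports multiple LLM providers and includes pre-built chains for common patterns.

    from langchain.agents import create_openai_tools_agent
    from langchain_core.prompts import ChatPromptTemplate, MessagesPlaceholder
    from langchain_openai import ChatOpenAI

    llm = ChatOpenAI(model="gpt-4")
    # Placeholders: define these three as LangChain tools before running
    tools = [weather_tool, calculator_tool, database_tool]

    # The agent constructor also needs a prompt with an agent_scratchpad slot
    prompt = ChatPromptTemplate.from_messages(
        [("system", "You are a helpful assistant."), ("human", "{input}"),
         MessagesPlaceholder("agent_scratchpad")])
    agent = create_openai_tools_agent(llm, tools, prompt)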

AutoGen

Microsoft’s AutoGen framework specializes in multi-agent systems where specialized agents collaborate. This enables complex workflows in which agents handle distinct responsibilities: one for research, another for code generation, a third for validation.

CrewAI

CrewAI focuses on role-based agent architectures. You define agents with specific roles, goals, and tools, then organize them into crews that tackle objectives collaboratively. This mirrors human team structures in software development, marketing, and customer service.

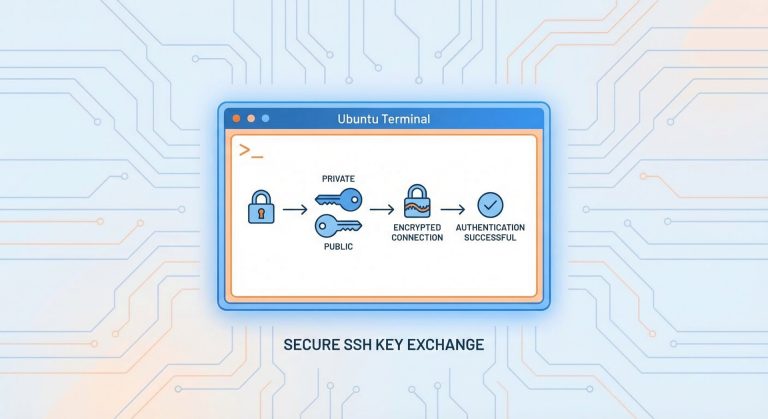

Implementing API-Based LLM Agents

The most common implementation connects agents to hosted LLM services through their APIs. This approach offers cost-effectiveness and scalability without infrastructure overhead.

Choosing an LLM Provider

Major LLM providers for agent automation in 2026 include:

- OpenAI GPT-5.4 – Native computer use and configurable reasoning depth; highest capability but premium pricing

- Anthropic Claude Sonnet 4.6 – Excellent instruction following and safety; balanced cost-performance

- Google Gemini 3.1 Pro – Strong multimodal capabilities and massive context windows

- Meta Llama 4 Scout – Open-weight model with a 10M token context; cost-effective for high-volume use

Over 70% of AI developers rely on API-based models rather than self-hosted ones for agent automation, according to 2026 surveys. This reflects a pace of innovation that makes API access more practical than maintaining custom infrastructure.

Sample Implementation

Here’s a basic customer support agent in Python:

    import json

    from openai import OpenAI

    client = OpenAI()  # reads OPENAI_API_KEY from the environment


    def customer_support_agent(ticket):
        # Each tool's "parameters" must be a JSON Schema object
        tools = [
            {
                "type": "function",
                "function": {
                    "name": "search_knowledge_base",
                    "description": "Search documentation for solutions",
                    "parameters": {
                        "type": "object",
                        "properties": {"query": {"type": "string"}},
                        "required": ["query"],
                    },
                },
            },
            {
                "type": "function",
                "function": {
                    "name": "create_internal_ticket",
                    "description": "Escalate to human support",
                    "parameters": {
                        "type": "object",
                        "properties": {"priority": {"type": "string"}},
                        "required": ["priority"],
                    },
                },
            },
        ]

        messages = [
            {"role": "system", "content": "You are a customer support agent. Use tools to resolve tickets."},
            {"role": "user", "content": ticket},
        ]

        response = client.chat.completions.create(
            model="gpt-4",
            messages=messages,
            tools=tools,
        )

        # Handle tool calls and iterate until resolution
        return response
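The "handle tool calls" step could look something like the following sketch. It assumes the model returns each requested call as a tool name plus a JSON-encoded argument string, and that the result goes back as a message with the role "tool". The two tool functions are hypothetical stubs, and `dispatch_tool_call` is a name chosen for this example rather than part of any SDK.

```python
import json


def search_knowledge_base(query: str) -> str:
    # Hypothetical stub; a real version would query a documentation index.
    return f"Top article for '{query}'"


def create_internal_ticket(priority: str) -> str:
    # Hypothetical stub standing in for a ticketing-system call.
    return f"Escalated with priority {priority}"


TOOL_REGISTRY = {
    "search_knowledge_base": search_knowledge_base,
    "create_internal_ticket": create_internal_ticket,
}


def dispatch_tool_call(call_id: str, name: str, arguments_json: str) -> dict:
    """Run one requested tool and build the message that reports its result."""
    if name not in TOOL_REGISTRY:
        content = f"Error: unknown tool {name}"
    else:
        try:
            content = TOOL_REGISTRY[name](**json.loads(arguments_json))
        except (json.JSONDecodeError, TypeError) as exc:
            # Malformed JSON or wrong argument names are reported, not raised
            content = f"Error: bad arguments ({exc})"
    return {"role": "tool", "tool_call_id": call_id, "content": content}


print(dispatch_tool_call("call_1", "search_knowledge_base", '{"query": "password reset"}'))
```

Returning errors as message content instead of raising gives the model a chance to correct its own call on the next iteration.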

Retrieval-Augmented Generation for LLM Agents

Retrieval-augmented generation (RAG) significantly improves agent accuracy by connecting models to external knowledge bases. Stanford research shows RAG improves accuracy by up to 35% for knowledge-intensive tasks.

RAG Architecture

Effective RAG for LLM agents combines:

- Vector Database – Store document embeddings (Pinecone, Weaviate, Chroma)

- Embedding Model – Convert queries and documents to vectors

- Retrieval Logic – Find relevant context based on similarity

- Context Injection – Insert retrieved content into LLM prompts

This gives agents access to proprietary documentation, customer history, and real-time data without model retraining. Customer support agents using RAG achieve 60% faster ticket resolution while maintaining higher accuracy than base LLMs alone.
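To make the four pieces concrete, here is a toy, dependency-free sketch. It is an illustration only: word counts stand in for a learned embedding model, an in-memory list stands in for the vector database, and the three documents are invented.

```python
import math
import re
from collections import Counter

DOCUMENTS = [
    "To reset your password, open Settings and choose Reset Password.",
    "Invoices are emailed on the first day of each month.",
    "Contact support by chat between 9am and 5pm on weekdays.",
]


def embed(text: str) -> Counter:
    """Toy embedding: lowercase word counts (a real system uses a learned model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


# Stands in for the vector database: each document stored next to its vector
INDEX = [(doc, embed(doc)) for doc in DOCUMENTS]


def retrieve(query: str, k: int = 2) -> list:
    """Retrieval logic: rank stored documents by similarity to the query."""
    q = embed(query)
    ranked = sorted(INDEX, key=lambda item: cosine(q, item[1]), reverse=True)
    return [doc for doc, _ in ranked[:k]]


def build_prompt(query: str, k: int = 2) -> str:
    """Context injection: place the retrieved passages ahead of the question."""
    context = "\n".join(f"- {doc}" for doc in retrieve(query, k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"


print(build_prompt("How do I reset my password?", k=1))
```

Swapping `embed` for a real embedding model and `INDEX` for a vector store changes the quality of retrieval, not the shape of the pipeline.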

Implementation Example

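    # Sketch against LangChain 0.1-0.3; RetrievalQA is a legacy chain in later releases
    from langchain.chains import RetrievalQA
    from langchain_community.vectorstores import Chroma
    from langchain_openai import ChatOpenAI, OpenAIEmbeddings

    # Open the persisted Chroma collection of document embeddings
    vectorstore = Chroma(
        persist_directory="./knowledge_base",
        embedding_function=OpenAIEmbeddings(),
    )

    # Retrieve the 5 most similar chunks and pass them to the model as context
    qa_chain = RetrievalQA.from_chain_type(
        llm=ChatOpenAI(model="gpt-4"),
        retriever=vectorstore.as_retriever(search_kwargs={"k": 5}),
    )

    result = qa_chain.invoke({"query": "How do I reset my password?"})
    print(result["result"])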

Multi-Agent LLM Systems

Complex workflows benefit from multi-agent architectures where specialized agents collaborate. Gartner predicts over 50% of enterprise applications will include AI agents by 2027.

Agent Specialization Patterns

Effective multi-agent systems assign clear responsibilities:

- Research Agent – Gathers information from knowledge bases and APIs

- Analysis Agent – Processes data and generates insights

- Content Agent – Creates customer-facing communications

- Validation Agent – Reviews outputs for accuracy and compliance

- Orchestrator Agent – Coordinates workflow between specialists

This mirrors human team structures, making agent outputs more reliable through specialized expertise and cross-checking.
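A framework-independent sketch of the pattern follows. Everything here is an assumption made for illustration: the `Agent` dataclass, the three handler functions, and the sequential hand-off are placeholders, and each handler would be a model call with a role-specific prompt in a real system.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Agent:
    name: str
    handle: Callable[[str], str]  # in practice, a model call with a role-specific prompt


def research(task: str) -> str:
    return f"notes on {task}"


def write(notes: str) -> str:
    return f"Draft based on {notes}"


def validate(draft: str) -> str:
    # Cross-check: reject drafts that do not build on the research output
    if "notes on" not in draft:
        raise ValueError("draft is not grounded in research notes")
    return draft


def orchestrate(task: str, specialists: List[Agent]) -> dict:
    """Pass each specialist's output to the next and keep a trace of every step."""
    trace, payload = [], task
    for agent in specialists:
        payload = agent.handle(payload)
        trace.append((agent.name, payload))
    return {"result": payload, "trace": trace}


crew = [Agent("Research", research), Agent("Content", write), Agent("Validation", validate)]
outcome = orchestrate("AI trends", crew)
print(outcome["result"])  # prints "Draft based on notes on AI trends"
```

Keeping the trace alongside the result makes it possible to see which specialist introduced an error when the validation step fails.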

AutoGen Multi-Agent Example

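    # AutoGen 0.2-style API (pyautogen); the API key is read from OPENAI_API_KEY
    from autogen import AssistantAgent, GroupChat, GroupChatManager, UserProxyAgent

    llm_config = {"config_list": [{"model": "gpt-4"}]}

    researcher = AssistantAgent(
        name="Researcher",
        system_message="Research information thoroughly",
        llm_config=llm_config,
    )

    writer = AssistantAgent(
        name="Writer",
        system_message="Create engaging content from research",
        llm_config=llm_config,
    )

    user_proxy = UserProxyAgent(
        name="User",
        human_input_mode="NEVER",
        code_execution_config=False,  # this workflow does not run generated code
    )

    # A group chat lets both assistants take turns; otherwise the Writer never participates
    group_chat = GroupChat(agents=[user_proxy, researcher, writer], messages=[], max_round=6)
    manager = GroupChatManager(groupchat=group_chat, llm_config=llm_config)

    # Agents collaborate on task
    user_proxy.initiate_chat(
        manager,
        message="Research AI trends and create a blog post",
    )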

Fine-Tuning for Specialized LLM Agents

Domain-specific agents benefit from fine-tuning base models on proprietary data. Fine-tuned models show over 20% relevance improvement for specialized tasks like medical diagnosis support or legal document analysis.

When to Fine-Tune

Consider fine-tuning the model behind your agent when:

- Your domain uses specialized terminology not well-represented in base models

- You need consistent formatting or style matching your brand

- RAG retrieval overhead impacts latency unacceptably

- You have sufficient high-quality training data (at least 1,000 examples)

Popular fine-tuning approaches include parameter-efficient methods like LoRA, which reduce computational costs while maintaining performance gains.
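A quick calculation shows where LoRA's savings come from. LoRA freezes a weight matrix of shape d_out x d_in and trains two small factors, B (d_out x r) and A (r x d_in), whose product is added to the frozen weights. The layer size 4096 and rank 8 below are illustrative assumptions, not recommendations.

```python
def full_finetune_params(d_out: int, d_in: int) -> int:
    """Trainable weights when the whole matrix is updated."""
    return d_out * d_in


def lora_trainable_params(d_out: int, d_in: int, rank: int) -> int:
    """Trainable weights in the two low-rank factors B (d_out x rank) and A (rank x d_in)."""
    return rank * (d_out + d_in)


full = full_finetune_params(4096, 4096)      # 16,777,216
lora = lora_trainable_params(4096, 4096, 8)  # 65,536
print(f"LoRA trains {lora / full:.2%} of this layer's weights")  # prints 0.39%
```

For this single layer, the adapter trains well under 1% of the weights, which is why the method fits on much smaller hardware than full fine-tuning.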

Security and Guardrails for LLM Agents

Production agent systems require robust security to prevent harmful actions and data leaks.

Essential Guardrails

- Input Validation – Detect and block prompt injection attempts

- Output Filtering – Screen responses for sensitive data before delivery

- Action Constraints – Limit which APIs and operations agents can access

- Human Approval Gates – Require confirmation for high-risk actions

- Audit Logging – Record all agent decisions and actions for review

Implementing these protections reduces agent security incidents by over 90%, according to enterprise deployment data.
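Three of these guardrails (action constraints, human approval gates, and audit logging) can share one wrapper around tool execution. The sketch below is a minimal illustration: the action names, the in-memory log, and the `approver` callback are all assumptions made for the example.

```python
import time
from typing import Callable, List, Optional

ALLOWED_ACTIONS = {"search_knowledge_base", "send_reply", "issue_refund"}  # hypothetical names
HIGH_RISK_ACTIONS = {"issue_refund"}
AUDIT_LOG: List[dict] = []  # a real system would write to durable storage


def guarded_execute(action: str, args: dict, run: Callable[..., str],
                    approver: Optional[Callable[[str, dict], bool]] = None) -> str:
    """Apply an allowlist and an approval gate before running an action, then log the outcome."""
    if action not in ALLOWED_ACTIONS:
        status, result = "blocked", "action not permitted"
    elif action in HIGH_RISK_ACTIONS and not (approver and approver(action, args)):
        status, result = "needs_approval", "waiting for human confirmation"
    else:
        status, result = "executed", run(**args)
    AUDIT_LOG.append({"time": time.time(), "action": action, "args": args, "status": status})
    return result


blocked = guarded_execute("delete_database", {}, lambda: "done")
pending = guarded_execute("issue_refund", {"amount": 20}, lambda amount: f"refunded {amount}")
approved = guarded_execute("issue_refund", {"amount": 20}, lambda amount: f"refunded {amount}",
                           approver=lambda action, args: args["amount"] <= 50)
print([entry["status"] for entry in AUDIT_LOG])
```

Because every path through the function appends to the log, blocked and pending attempts are recorded as well as successful ones.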

Prompt Injection Prevention

Protect agents from prompt injection with techniques like the pattern check below. A keyword list catches only the crudest attempts, so treat it as one layer alongside the other guardrails:

    def validate_input(user_input):
        # Check for suspicious patterns
        dangerous_patterns = [
            "ignore previous instructions",
            "disregard system message",
            "act as if you are",
        ]
        lowered = user_input.lower()
        for pattern in dangerous_patterns:
            if pattern in lowered:
                return False, "Invalid input detected"
        return True, user_input

    # raw_user_input and agent are placeholders for your own input source and agent object
    valid, cleaned_input = validate_input(raw_user_input)
    if valid:
        agent.process(cleaned_input)

Monitoring and Observability for LLM Agents

Production agents require comprehensive monitoring to detect failures and optimize performance.

Key Metrics

- Task Completion Rate – Percentage of workflows finishing successfully

- Average Tool Calls – Efficiency indicator for multi-step tasks

- Latency – End-to-end response time for agent workflows

- Cost per Task – LLM API costs and infrastructure expenses

- Error Rates – Failed tool calls, timeouts, and exceptions

Tools like LangSmith, Weights & Biases, and custom observability platforms help track agent performance in production.
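If you log one record per agent run, the key metrics reduce to simple aggregates. The following sketch assumes a hypothetical `RunRecord` structure and made-up sample values; the field names are not taken from any particular monitoring tool.

```python
from dataclasses import dataclass
from statistics import mean
from typing import List


@dataclass
class RunRecord:
    succeeded: bool
    tool_calls: int
    failed_tool_calls: int
    latency_s: float
    cost_usd: float


def summarize(runs: List[RunRecord]) -> dict:
    """Aggregate per-run records into the headline metrics."""
    if not runs:
        raise ValueError("no runs to summarize")
    total_calls = sum(r.tool_calls for r in runs)
    return {
        "task_completion_rate": sum(r.succeeded for r in runs) / len(runs),
        "avg_tool_calls": mean([r.tool_calls for r in runs]),
        "avg_latency_s": mean([r.latency_s for r in runs]),
        "cost_per_task_usd": sum(r.cost_usd for r in runs) / len(runs),
        "tool_error_rate": sum(r.failed_tool_calls for r in runs) / total_calls if total_calls else 0.0,
    }


# Made-up sample data for illustration
runs = [
    RunRecord(True, 3, 0, 4.0, 0.02),
    RunRecord(True, 5, 1, 6.0, 0.04),
    RunRecord(False, 8, 3, 11.0, 0.06),
    RunRecord(True, 4, 0, 3.0, 0.04),
]
summary = summarize(runs)
print(summary)
```

In this sample, the failed run is also the one with the most tool calls and the highest latency, the kind of correlation these metrics are meant to surface.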

Cost Optimization for LLM Agents

Managing costs becomes critical as agent automation scales to thousands of daily workflows.

Optimization Strategies

- Model Selection – Use smaller models for simple tasks; reserve flagship models for complex reasoning

- Prompt Engineering – Reduce token usage through concise instructions and structured outputs

- Caching – Store and reuse responses for repeated queries

- Batch Processing – Combine multiple tasks into single API calls when possible

- Rate Limiting – Prevent runaway costs from infinite loops or excessive retries

Organizations implementing these strategies report 40-60% cost reductions in agent operations without sacrificing quality.
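Three of these strategies (model selection, caching, and a cap on retries) fit into one small wrapper. This is a sketch under assumptions: the tier names "small-model" and "flagship-model" are placeholders, the word-count router is deliberately crude, and `call_model` is whatever function actually reaches your provider.

```python
import hashlib
from typing import Callable, Dict, List

CACHE: Dict[str, str] = {}


def choose_model(prompt: str, threshold_words: int = 50) -> str:
    """Crude router: short prompts go to a cheaper tier (tier names are placeholders)."""
    return "small-model" if len(prompt.split()) < threshold_words else "flagship-model"


def cached_completion(prompt: str, call_model: Callable[[str, str], str],
                      max_attempts: int = 3) -> str:
    model = choose_model(prompt)
    key = hashlib.sha256(f"{model}\n{prompt}".encode()).hexdigest()
    if key in CACHE:                   # reuse a stored response for an identical prompt
        return CACHE[key]
    last_error = None
    for _ in range(max_attempts):      # bounded retries prevent runaway spend
        try:
            CACHE[key] = call_model(model, prompt)
            return CACHE[key]
        except TimeoutError as exc:
            last_error = exc
    raise RuntimeError(f"gave up after {max_attempts} attempts") from last_error


calls: List[str] = []


def fake_model(model: str, prompt: str) -> str:
    # Stand-in for a provider call; records which tier was used
    calls.append(model)
    return f"[{model}] answer"


first = cached_completion("Reset my password", fake_model)
second = cached_completion("Reset my password", fake_model)
print(first, len(calls))  # the second request is served from the cache
```

Exact-match caching only helps with literally repeated prompts; normalizing whitespace or caching at the retrieval layer are common next steps.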

Real-World LLM Agent Use Cases

Successful LLM agent deployments span multiple industries:

Customer Support Automation

AI agents handle tier-1 support tickets by searching knowledge bases, accessing customer accounts, and resolving common issues. RAG-enhanced agents resolve 70% or more of tickets without human escalation.

Content Generation Pipelines

Marketing teams use LLM agents for end-to-end content workflows: researching topics, drafting articles, generating images, and scheduling publication. Multi-agent systems produce content 5x faster than manual processes.

Data Analysis Workflows

Business analysts deploy LLM agents to query databases, generate visualizations, and create reports. Agents with SQL execution capabilities and charting tools deliver insights in minutes rather than the hours that manual work takes.
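A SQL execution tool should be restricted before it is handed to an agent. The sketch below uses the standard library's `sqlite3` module with an invented `orders` table; `run_readonly_query` is a hypothetical helper, and its prefix check is a conservative illustration rather than a complete security control (a read-only database role is the stronger safeguard).

```python
import sqlite3


def run_readonly_query(conn: sqlite3.Connection, sql: str, max_rows: int = 100) -> list:
    """Execute a single SELECT statement and return at most max_rows rows."""
    statement = sql.strip().rstrip(";")
    # Conservative check: also rejects SELECTs that contain ';' inside string literals
    if ";" in statement or not statement.lower().startswith("select"):
        raise ValueError("only single SELECT statements are allowed")
    return conn.execute(statement).fetchmany(max_rows)


# Invented sample data for illustration
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (region TEXT, amount REAL)")
conn.executemany("INSERT INTO orders VALUES (?, ?)",
                 [("EU", 120.0), ("EU", 80.0), ("US", 200.0)])

rows = run_readonly_query(
    conn, "SELECT region, SUM(amount) FROM orders GROUP BY region ORDER BY region;")
print(rows)  # prints [('EU', 200.0), ('US', 200.0)]
```

The `max_rows` limit keeps an overly broad query from flooding the model's context with results.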

Software Development Assistance

Development teams use LLM agents for code review, test generation, and documentation. Specialized agents analyze codebases, suggest improvements, and maintain technical documentation automatically.

Future Trends in LLM Agent Automation

The LLM agent landscape is evolving rapidly, with several emerging trends:

Native Computer Use

Models like GPT-5.4 include native computer control, enabling agents to interact directly with desktop applications and web interfaces. This eliminates custom API integration for many use cases.

Reasoning Optimization

Configurable reasoning depth allows agents to allocate computational resources based on task complexity. Simple queries complete quickly, while complex planning receives extended reasoning time.

Hybrid Agent Architectures

Combining LLM-based agents with traditional rule-based systems creates reliable automation for regulated industries. Rules handle compliance requirements while LLMs provide flexibility for edge cases.
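One way to picture the hybrid pattern is a deterministic rule layer that runs first and falls through to the model only when no rule applies. The two rules and the `llm` callback below are assumptions chosen for illustration.

```python
import re
from typing import Callable, Optional

# 13 to 16 digits, optionally separated by single spaces or hyphens
CARD_NUMBER = re.compile(r"\b\d(?:[ -]?\d){12,15}\b")


def rule_layer(message: str) -> Optional[str]:
    """Deterministic compliance rules; returns a response, or None if no rule applies."""
    if CARD_NUMBER.search(message):
        return "Please do not share card numbers. A human agent will contact you."
    if "close my account" in message.lower():
        return "Account closure requires identity verification; routing to a specialist."
    return None


def hybrid_respond(message: str, llm: Callable[[str], str]) -> str:
    ruled = rule_layer(message)
    # Rules take precedence; the model only sees messages no rule claimed
    return ruled if ruled is not None else llm(message)


def echo_llm(message: str) -> str:
    # Stand-in for a real model call
    return f"LLM: {message}"


print(hybrid_respond("What are your opening hours?", echo_llm))
print(hybrid_respond("My card is 4111 1111 1111 1111", echo_llm))
```

Because the rule layer never calls the model, its behavior can be tested and audited like conventional software.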

Edge Deployment

Smaller models like Llama 4 Scout enable agent deployments on edge devices and private clouds. This addresses data sovereignty requirements and reduces latency for real-time applications.

Building Your First LLM Agent System

Start with these steps:

- Define Use Case – Identify a workflow with clear success criteria and manageable complexity

- Select Framework – Choose LangChain, AutoGen, or CrewAI based on requirements

- Prototype Agent – Build a minimal viable agent with 1-2 tools

- Add Guardrails – Implement input validation and output filtering

- Test Thoroughly – Validate agent behavior across edge cases

- Monitor in Production – Track metrics and iterate based on real usage

Starting small enables rapid learning while minimizing risk in agent adoption.

Common Pitfalls in LLM Agent Automation

Avoid these frequent mistakes when building LLM agents:

- Over-Automation – Starting with extremely complex workflows before mastering basics

- Insufficient Testing – Deploying agents without comprehensive edge case validation

- Poor Tool Design – Creating APIs that agents struggle to use effectively

- Neglecting Monitoring – Missing failures and degradation without observability

- Cost Blindness – Ignoring API costs until bills become unsustainable

Learning from these common issues accelerates your implementation.

Conclusion: The Future of LLM Agent Automation

LLM agent automation represents a paradigm shift from static software to intelligent, adaptive systems. As models evolve with better reasoning, native tool use, and lower costs, the scope of automatable workflows expands dramatically.

Organizations that master LLM agent automation gain competitive advantages through faster operations, reduced costs, and improved customer experiences. The technology transforms customer support, content creation, data analysis, and software development, displacing repetitive tasks while augmenting human creativity and strategic thinking.

Success with LLM agents requires balancing ambition with pragmatism. Start with well-defined use cases, implement robust guardrails, and scale gradually based on measured results. The investment in learning this technology pays dividends as it matures and new capabilities emerge throughout 2026 and beyond.

Whether you’re building customer support agents, content generation pipelines, or data analysis workflows, LLM agents provide the foundation for the next generation of intelligent automation. Begin your journey today to stay ahead in the rapidly evolving AI landscape.

About the Author

Mark is a senior content editor at Text-Center.com and has more than 20 years of experience with Linux and Windows operating systems. He also writes for Biteno.com.