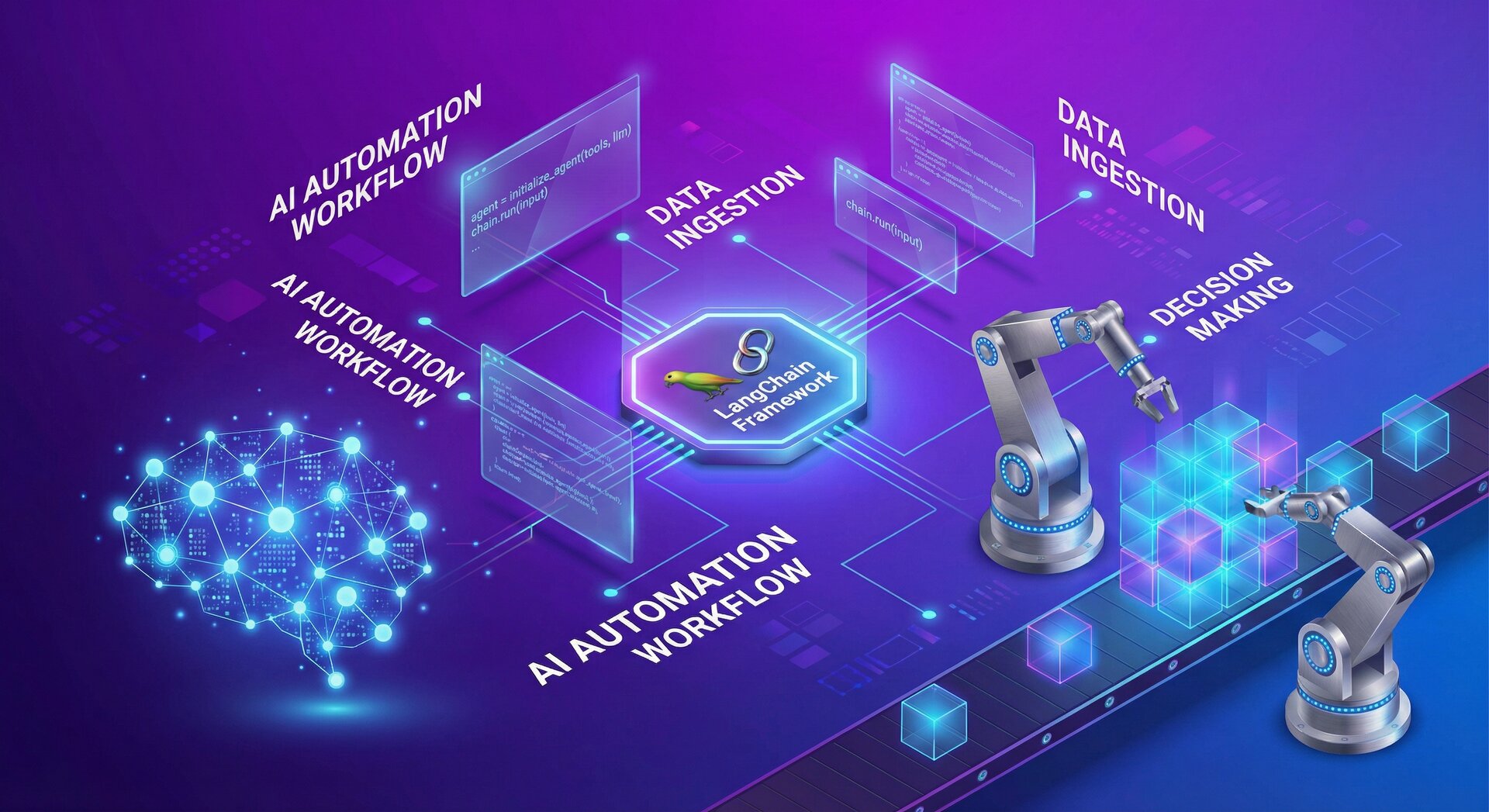

How to Build LLM Automation with LangChain in 2026

LangChain is a widely used framework for building LLM-powered applications. This guide shows how to use it to automate work with large language models: prompt chains, retrieval-augmented generation (RAG) systems, autonomous agents, and conversational memory. It is written for developers and data scientists who are comfortable with Python.

LangChain provides building blocks for orchestrating LLM workflows, from simple prompt chaining to autonomous agents. The tutorial covers each step of an implementation, from environment setup to production deployment.

What is LangChain and Why Use It for LLM Automation?

LangChain has emerged as the dominant framework for LLM automation, abstracting the complexity of working with large language models while providing flexible building blocks for sophisticated AI systems. When you implement LLM automation with LangChain, you gain access to modular components that handle prompt management, memory systems, tool integration, and agent orchestration.

Unlike direct API calls to GPT-4, Claude, or other LLMs, LangChain enables you to:

- Chain multiple LLM calls with context preservation between steps

- Integrate external data sources through retrieval-augmented generation (RAG)

- Build autonomous agents that use tools and make decisions

- Implement memory systems for conversational continuity

- Switch between different LLM providers without code rewrites

The framework handles the orchestration complexity, letting you focus on business logic rather than low-level prompt engineering and state management.
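The last point in the list, switching providers without rewrites, comes down to writing business logic against a shared interface. The sketch below is framework-free and does not use LangChain itself; `ChatModel`, `EchoProvider`, and `UppercaseProvider` are hypothetical stand-ins for real provider clients.

```python
from typing import Protocol


class ChatModel(Protocol):
    """Hypothetical minimal interface that every provider wrapper satisfies."""

    def complete(self, prompt: str) -> str: ...


class EchoProvider:
    """Stand-in for one provider; a real wrapper would call its API here."""

    def complete(self, prompt: str) -> str:
        return f"echo: {prompt}"


class UppercaseProvider:
    """Stand-in for a second provider with different behavior."""

    def complete(self, prompt: str) -> str:
        return prompt.upper()


def summarize(model: ChatModel, text: str) -> str:
    # Business logic depends only on the interface, not on a concrete provider.
    return model.complete(f"Summarize: {text}")


if __name__ == "__main__":
    for provider in (EchoProvider(), UppercaseProvider()):
        print(summarize(provider, "quarterly report"))
```

Swapping the provider changes one constructor call, while `summarize` stays untouched.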

Core Concepts for LLM Automation with LangChain

Chains: Sequential workflows that pass outputs from one LLM call to the next. Simple chains handle linear processing, while more complex chains implement branching logic based on intermediate results.

Agents: Autonomous systems that select tools and actions dynamically based on user input. Agents represent the highest level of LLM automation, making decisions about which operations to perform and in what sequence.

Memory: Systems for maintaining conversation history and context across multiple interactions. Buffer memory stores recent messages, while vector memory enables semantic search through historical interactions.

Tools: External functions that agents can invoke—database queries, API calls, web searches, calculations. Tools extend LLM capabilities beyond text generation.

Retrieval: Integration with vector databases for RAG implementations. This allows LLMs to access domain-specific knowledge without retraining.
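To make the chain concept above concrete, here is a minimal framework-free sketch of a sequential chain with one branching step. The step functions are hypothetical placeholders; in a real chain each one would wrap an LLM call.

```python
from typing import Callable

Step = Callable[[str], str]


def run_chain(steps: list[Step], text: str) -> str:
    """Feed the output of each step into the next one."""
    for step in steps:
        text = step(text)
    return text


def branch(condition: Callable[[str], bool], if_true: Step, if_false: Step) -> Step:
    """Build a step that picks one of two steps based on the intermediate result."""
    def step(text: str) -> str:
        return if_true(text) if condition(text) else if_false(text)
    return step


# Hypothetical steps standing in for LLM calls.
def strip_whitespace(text: str) -> str:
    return text.strip()


def tag_urgent(text: str) -> str:
    return f"[URGENT] {text}"


def tag_normal(text: str) -> str:
    return f"[normal] {text}"


pipeline = [
    strip_whitespace,
    branch(lambda t: "asap" in t.lower(), tag_urgent, tag_normal),
]

if __name__ == "__main__":
    print(run_chain(pipeline, "  Need this ASAP  "))
    print(run_chain(pipeline, "No rush"))
```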

Setting Up Your LangChain Development Environment

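Before building LLM automation with LangChain, set up a development environment. Start with Python 3.9+ and install the core dependencies. The examples in this guide use LangChain's classic chain and agent interfaces (LLMChain, initialize_agent, and the .run() method), which newer releases deprecate and which the 1.0 release moved out of the main langchain package, so the first line pins an earlier version:

    pip install "langchain<1.0" langchain-openai langchain-community
    pip install chromadb sentence-transformers
    pip install python-dotenv tenacity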

For this tutorial, we'll use OpenAI's GPT-4 as our LLM provider, but LangChain supports numerous alternatives, including Anthropic Claude, Google Gemini, and open-source models via Hugging Face. Store your API credentials in a .env file that stays out of version control:

    # .env
    OPENAI_API_KEY=your_api_key_here

Never hardcode API keys in source code. Use environment variables or secrets management systems for production deployments. Learn more about Python security best practices for handling sensitive credentials.
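One way to follow this advice is to read the key from the environment once, fail early when it is missing, and mask it in logs. This is a minimal standard-library sketch; `require_api_key` and `mask` are hypothetical helper names, not part of LangChain or python-dotenv.

```python
import os
from typing import Mapping, Optional


def require_api_key(name: str = "OPENAI_API_KEY",
                    env: Optional[Mapping[str, str]] = None) -> str:
    """Return the key from the environment or fail with a clear message."""
    source = os.environ if env is None else env
    value = source.get(name, "").strip()
    if not value:
        raise RuntimeError(f"{name} is not set; add it to your environment or .env file")
    return value


def mask(secret: str, visible: int = 4) -> str:
    """Mask a secret for logging, keeping only the last few characters."""
    if len(secret) <= visible:
        return "*" * len(secret)
    return "*" * (len(secret) - visible) + secret[-visible:]
```

The `env` parameter exists only so the function can be tested without touching the real environment.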

Building Your First LLM Automation Chain

Let’s create a simple but practical LLM automation with LangChain—an automated email response generator. This chain analyzes incoming emails and drafts contextually appropriate responses:

    from dotenv import load_dotenv
    from langchain.chains import LLMChain
    from langchain.prompts import ChatPromptTemplate
    from langchain_openai import ChatOpenAI

    load_dotenv()  # loads OPENAI_API_KEY from the .env file

    llm = ChatOpenAI(model="gpt-4", temperature=0.7)

    # Step 1 prompt: label the email with one category
    classification_prompt = ChatPromptTemplate.from_messages([
        ("system", "You are an email classifier. Categorize emails as: urgent, inquiry, complaint, or general. Reply with the category name only."),
        ("human", "Email content: {email_content}")
    ])

    # Step 2 prompt: draft a reply that takes the category into account
    response_prompt = ChatPromptTemplate.from_messages([
        ("system", "You are a professional customer service representative. Write a helpful response to this {category} email."),
        ("human", "Email content: {email_content}")
    ])

    classification_chain = LLMChain(llm=llm, prompt=classification_prompt)
    response_chain = LLMChain(llm=llm, prompt=response_prompt)

    def automate_email_response(email_content):
        # The category from the first chain becomes an input to the second
        category = classification_chain.run(email_content=email_content).strip()
        response = response_chain.run(
            category=category,
            email_content=email_content
        )
        return {"category": category, "response": response}

    # Example usage
    email = "I've been waiting 3 days for my refund. This is unacceptable!"
    result = automate_email_response(email)
    print(f"Category: {result['category']}")
    print(f"Response: {result['response']}")

This implementation demonstrates chaining—the classification result informs the response generation, creating context-aware automation.
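Because the second step depends on the first step's output, it helps to normalize the classifier's reply before passing it on: a model may answer "Category: Complaint." rather than the bare label. The helper below is a hypothetical sketch of such a guard, using only the four categories named in the classification prompt.

```python
import re

ALLOWED_CATEGORIES = ("urgent", "inquiry", "complaint", "general")


def normalize_category(raw: str, default: str = "general") -> str:
    """Map free-form classifier output onto one of the allowed labels."""
    words = re.findall(r"[a-z]+", raw.lower())
    # Labels are checked in the order listed above; the first match wins.
    for label in ALLOWED_CATEGORIES:
        if label in words:
            return label
    return default


if __name__ == "__main__":
    print(normalize_category("Category: Complaint."))
```

Falling back to a default keeps the chain running when the model returns something unexpected; logging those cases would show how often that happens.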

Implementing Retrieval-Augmented Generation (RAG)

RAG represents a paradigm shift in LLM automation with LangChain, allowing models to access external knowledge bases without expensive fine-tuning. Here’s how to build a document Q&A system:

    from langchain.chains import RetrievalQA
    from langchain.text_splitter import RecursiveCharacterTextSplitter
    from langchain_community.document_loaders import DirectoryLoader, TextLoader
    from langchain_community.vectorstores import Chroma
    from langchain_openai import ChatOpenAI, OpenAIEmbeddings

    # Load and process documents
    loader = DirectoryLoader('./docs/', glob="**/*.txt", loader_cls=TextLoader)
    documents = loader.load()

    # Split into chunks for embedding
    text_splitter = RecursiveCharacterTextSplitter(
        chunk_size=1000,
        chunk_overlap=200
    )
    texts = text_splitter.split_documents(documents)

    # Create vector store
    embeddings = OpenAIEmbeddings()
    vectorstore = Chroma.from_documents(
        documents=texts,
        embedding=embeddings,
        persist_directory="./chroma_db"
    )

    # Build retrieval chain; "stuff" places all retrieved chunks into one prompt
    qa_chain = RetrievalQA.from_chain_type(
        llm=ChatOpenAI(model="gpt-4"),
        chain_type="stuff",
        retriever=vectorstore.as_retriever(search_kwargs={"k": 3})
    )

    # Query the system
    query = "What are the main features of our product?"
    answer = qa_chain.run(query)
    print(answer)

This RAG implementation chunks documents, generates embeddings, stores them in a vector database, and retrieves relevant context for each query. The LLM only sees pertinent information, improving accuracy while reducing hallucinations.
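The chunk-and-retrieve steps described above can be illustrated without any external service. The sketch below is a simplification under stated assumptions: it uses a plain sliding character window instead of LangChain's recursive splitter, and a toy bag-of-words vector instead of a learned embedding model. All function names are hypothetical.

```python
import math
from collections import Counter


def chunk_text(text: str, chunk_size: int, chunk_overlap: int) -> list[str]:
    """Split text into fixed-size character windows that overlap."""
    if not 0 <= chunk_overlap < chunk_size:
        raise ValueError("chunk_overlap must be >= 0 and smaller than chunk_size")
    step = chunk_size - chunk_overlap
    chunks = []
    start = 0
    while start < len(text):
        chunks.append(text[start:start + chunk_size])
        if start + chunk_size >= len(text):
            break  # the last window already reaches the end of the text
        start += step
    return chunks


def embed(text: str) -> Counter:
    """Toy bag-of-words vector; real systems use a learned embedding model."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[term] * b[term] for term in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def top_k(query: str, chunks: list[str], k: int = 3) -> list[str]:
    """Return the k chunks most similar to the query."""
    query_vector = embed(query)
    ranked = sorted(chunks, key=lambda c: cosine(query_vector, embed(c)), reverse=True)
    return ranked[:k]


if __name__ == "__main__":
    print(chunk_text("0123456789", chunk_size=4, chunk_overlap=2))
    docs = ["refunds take five days", "shipping is free", "our office is in Berlin"]
    print(top_k("how long do refunds take", docs, k=1))
```

The overlap means text near a chunk boundary appears in two chunks, so a sentence cut by one window is usually intact in the next.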

Creating Autonomous Agents with LangChain

Agents represent the pinnacle of LLM automation with LangChain—systems that autonomously select and execute tools based on reasoning. Here’s a practical agent that can search the web, perform calculations, and access databases:

    from langchain.agents import AgentType, Tool, initialize_agent
    from langchain.chains import LLMMathChain
    from langchain_community.utilities import SerpAPIWrapper, SQLDatabase
    from langchain_openai import ChatOpenAI

    llm = ChatOpenAI(model="gpt-4", temperature=0)

    # Define tools the agent can use
    search = SerpAPIWrapper()  # expects SERPAPI_API_KEY in the environment
    llm_math = LLMMathChain.from_llm(llm)
    db = SQLDatabase.from_uri("sqlite:///company_data.db")

    tools = [
        Tool(
            name="Search",
            func=search.run,
            description="Useful for finding current information on the web"
        ),
        Tool(
            name="Calculator",
            func=llm_math.run,
            description="Useful for mathematical calculations"
        ),
        Tool(
            name="Database",
            func=db.run,
            description="Useful for querying company records. Input must be a SQL query."
        )
    ]

    # Initialize agent; max_iterations caps the reason-and-act loop
    agent = initialize_agent(
        tools,
        llm,
        agent=AgentType.ZERO_SHOT_REACT_DESCRIPTION,
        verbose=True,
        max_iterations=5
    )

    # Agent autonomously decides which tools to use
    result = agent.run(
        "What's the current Bitcoin price and how many employees do we have in the Sales department?"
    )
    print(result)

The agent analyzes the query, recognizes it requires web search for Bitcoin prices and database access for employee counts, executes both tools, and synthesizes a coherent response. This autonomous decision-making is the hallmark of advanced LLM automation with LangChain.
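The loop behind this behavior is simple to sketch: decide on an action, run the tool, record the observation, and repeat until done or until an iteration cap is reached. The version below is a framework-free illustration, not LangChain's implementation; a scripted policy stands in for the LLM, and the tools and their data are hypothetical.

```python
from typing import Callable, Optional

Tool = Callable[[str], str]
# A decision is (tool_name, tool_input), or None when the agent is done.
Decision = Optional[tuple[str, str]]


def run_agent(decide: Callable[[list[str]], Decision],
              tools: dict[str, Tool],
              max_iterations: int = 5) -> list[str]:
    """Ask the policy for an action, run the tool, and record the observation."""
    observations: list[str] = []
    for _ in range(max_iterations):
        decision = decide(observations)
        if decision is None:  # the policy signals it has enough information
            break
        name, tool_input = decision
        if name not in tools:
            observations.append(f"error: unknown tool {name!r}")
            continue
        observations.append(tools[name](tool_input))
    return observations


PLAN = [("Calculator", "2+3"), ("Database", "sales")]


def scripted_policy(observations: list[str]) -> Decision:
    # Hypothetical stand-in for the LLM: follow a fixed plan, then stop.
    step = len(observations)
    return PLAN[step] if step < len(PLAN) else None


TOOLS = {
    # Toy calculator that only understands sums such as "2+3".
    "Calculator": lambda expr: str(sum(int(part) for part in expr.split("+"))),
    # Toy lookup with made-up data in place of a real database.
    "Database": lambda dept: str({"sales": 12}.get(dept, 0)),
}

if __name__ == "__main__":
    print(run_agent(scripted_policy, TOOLS))
```

The iteration cap plays the same role as `max_iterations=5` in the agent above: it bounds cost when the policy never decides it is finished.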

Implementing Memory Systems for Conversational Agents

Stateless LLM calls forget previous interactions. Memory systems enable conversational continuity:

    from langchain.chains import ConversationChain
    from langchain.memory import ConversationBufferMemory, ConversationSummaryBufferMemory
    from langchain_openai import ChatOpenAI

    # Buffer memory stores recent messages
    memory = ConversationBufferMemory()

    conversation = ConversationChain(
        llm=ChatOpenAI(model="gpt-4"),
        memory=memory,
        verbose=True
    )

    conversation.predict(input="Hi, I'm interested in your Python courses.")
    conversation.predict(input="What's the duration?")
    conversation.predict(input="Do you offer certificates?")  # The chain remembers we're discussing Python courses

    # Summary memory for long conversations
    # (max_token_limit belongs to the summary-buffer variant, so that class is used here)
    summary_memory = ConversationSummaryBufferMemory(
        llm=ChatOpenAI(model="gpt-4"),
        max_token_limit=100
    )
    # Older messages are summarized once the history exceeds the token limit

Buffer memory works for short interactions, while summary memory compresses long conversations into manageable context, preventing token limit issues.
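The trade-off is easier to see with a small model of a bounded buffer. The sketch below is framework-free and makes a loud simplification: it counts whitespace-separated words instead of real tokens, and it drops old messages rather than summarizing them. `WindowMemory` is a hypothetical class name.

```python
from collections import deque


class WindowMemory:
    """Keep the most recent messages that fit within a rough token budget."""

    def __init__(self, max_tokens: int):
        self.max_tokens = max_tokens
        self.messages = deque()

    @staticmethod
    def count_tokens(text: str) -> int:
        # Assumption: word count as a crude stand-in for a real tokenizer.
        return len(text.split())

    def total_tokens(self) -> int:
        return sum(self.count_tokens(content) for _, content in self.messages)

    def add(self, role: str, content: str) -> None:
        self.messages.append((role, content))
        # Drop the oldest messages until the history fits the budget again,
        # but always keep the newest message.
        while self.total_tokens() > self.max_tokens and len(self.messages) > 1:
            self.messages.popleft()

    def as_prompt(self) -> str:
        return "\n".join(f"{role}: {content}" for role, content in self.messages)


if __name__ == "__main__":
    memory = WindowMemory(max_tokens=6)
    memory.add("human", "I like Python courses")
    memory.add("ai", "Great choice")
    memory.add("human", "How long")
    print(memory.as_prompt())
```

A summarizing memory would replace the `popleft()` call with a step that folds the dropped message into a running summary, which costs an extra model call but keeps older context.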

Production Deployment Considerations

Moving from prototype to production LLM automation with LangChain requires attention to several critical factors:

Error Handling and Retries:

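    from langchain_community.callbacks.manager import get_openai_callback
    from tenacity import retry, stop_after_attempt, wait_exponential

    # Retry up to 3 times, waiting between 4 and 10 seconds with exponential backoff
    @retry(stop=stop_after_attempt(3), wait=wait_exponential(multiplier=1, min=4, max=10))
    def call_llm_with_retry(chain, input_data):
        # The callback tracks token usage and cost for OpenAI calls made inside the block
        with get_openai_callback() as cb:
            result = chain.run(input_data)
        print(f"Tokens used: {cb.total_tokens}")
        print(f"Cost: ${cb.total_cost:.4f}")
        return result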

Prompt Versioning: Store prompts in configuration files or databases to enable A/B testing and rollback capabilities without code deployments.
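As a rough illustration of versioning with rollback, the sketch below keeps every published template and an active-version pointer per prompt. It is an in-memory stand-in for the configuration file or database mentioned above; `PromptRegistry` and its methods are hypothetical names.

```python
class PromptRegistry:
    """In-memory store of prompt versions with an active pointer per prompt."""

    def __init__(self):
        self._versions: dict[str, list[str]] = {}
        self._active: dict[str, int] = {}

    def publish(self, prompt_name: str, template: str) -> int:
        """Add a new version, make it active, and return its 1-based number."""
        versions = self._versions.setdefault(prompt_name, [])
        versions.append(template)
        self._active[prompt_name] = len(versions)
        return len(versions)

    def rollback(self, prompt_name: str, version: int) -> None:
        """Point the prompt back at an earlier version without deleting anything."""
        if not 1 <= version <= len(self._versions.get(prompt_name, [])):
            raise KeyError(f"{prompt_name} has no version {version}")
        self._active[prompt_name] = version

    def render(self, prompt_name: str, /, **variables: str) -> str:
        """Fill the active template with the given variables."""
        template = self._versions[prompt_name][self._active[prompt_name] - 1]
        return template.format(**variables)


if __name__ == "__main__":
    registry = PromptRegistry()
    registry.publish("greeting", "Hello {customer}, how can we help?")
    registry.publish("greeting", "Hi {customer}!")
    registry.rollback("greeting", 1)
    print(registry.render("greeting", customer="Ada"))
```

Because old versions are never overwritten, an A/B test can render two versions side by side, and a rollback is a pointer change rather than a deployment.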

Observability: Implement logging, tracing, and monitoring. LangChain integrates with platforms like LangSmith for production observability.

Cost Management: Monitor token usage and implement caching strategies. Caching embeddings and repeated responses avoids paying for the same API call twice.
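A response cache can be sketched in a few lines. The version below is a minimal in-memory example that assumes deterministic settings (for instance temperature 0), since caching a sampled response would freeze one random draw. `ResponseCache` is a hypothetical name, and `call_model` stands for whatever function actually calls the provider.

```python
import hashlib
from typing import Callable


class ResponseCache:
    """Memoize model responses keyed by a hash of model name and prompt."""

    def __init__(self, call_model: Callable[[str, str], str]):
        self._call_model = call_model
        self._store: dict[str, str] = {}
        self.hits = 0
        self.misses = 0

    @staticmethod
    def _key(model: str, prompt: str) -> str:
        return hashlib.sha256(f"{model}\n{prompt}".encode("utf-8")).hexdigest()

    def get(self, model: str, prompt: str) -> str:
        key = self._key(model, prompt)
        if key in self._store:
            self.hits += 1
        else:
            # Only a cache miss reaches the (paid) model call.
            self.misses += 1
            self._store[key] = self._call_model(model, prompt)
        return self._store[key]


if __name__ == "__main__":
    cache = ResponseCache(lambda model, prompt: prompt[::-1])  # fake model
    cache.get("demo-model", "abc")
    cache.get("demo-model", "abc")
    print(cache.hits, cache.misses)
```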

Security: Never pass unsanitized user input directly to LLMs. Implement input validation, output filtering, and rate limiting to prevent abuse.
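Two of these controls, input validation and rate limiting, can be sketched with the standard library. This is an illustrative minimum, not a defense against prompt injection; `RateLimiter` and `validate_input` are hypothetical names, and the limits are arbitrary example values.

```python
import time
from collections import deque
from typing import Callable


class RateLimiter:
    """Allow at most `limit` calls per `window` seconds (sliding window)."""

    def __init__(self, limit: int, window: float,
                 clock: Callable[[], float] = time.monotonic):
        self.limit = limit
        self.window = window
        self.clock = clock
        self._calls = deque()

    def allow(self) -> bool:
        now = self.clock()
        # Forget calls that have left the window.
        while self._calls and now - self._calls[0] >= self.window:
            self._calls.popleft()
        if len(self._calls) >= self.limit:
            return False
        self._calls.append(now)
        return True


def validate_input(text: str, max_chars: int = 2000) -> str:
    """Strip control characters, then reject empty or oversized input."""
    cleaned = "".join(ch for ch in text if ch.isprintable() or ch in "\n\t").strip()
    if not cleaned:
        raise ValueError("input is empty")
    if len(cleaned) > max_chars:
        raise ValueError(f"input exceeds {max_chars} characters")
    return cleaned
```

In a service, one limiter per user or API key would sit in front of the chain, with `validate_input` applied before the text is placed into a prompt.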

Advanced LLM Automation Patterns

Multi-Agent Systems: Coordinate multiple specialized agents for complex workflows. One agent handles planning, another executes tasks, a third validates outputs.

Human-in-the-Loop: Build approval workflows where critical agent decisions require human confirmation before execution.
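An approval gate of this kind can be as small as a wrapper around tool execution. In the sketch below, `Action`, `execute_with_approval`, and the `approve` callback are hypothetical; in practice the callback might post to a review queue or a chat channel and wait for a decision.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Action:
    name: str
    argument: str
    critical: bool = False


def execute_with_approval(action: Action,
                          run: Callable[[Action], str],
                          approve: Callable[[Action], bool]) -> str:
    """Run non-critical actions directly; ask a human before critical ones."""
    if action.critical and not approve(action):
        return f"rejected: {action.name}"
    return run(action)


if __name__ == "__main__":
    def run(action: Action) -> str:
        return f"done: {action.name}({action.argument})"

    refund = Action("refund", "order 42", critical=True)
    # A callback that always declines, standing in for a human reviewer.
    print(execute_with_approval(refund, run, approve=lambda a: False))
```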

Fine-Tuning Integration: Combine LangChain orchestration with fine-tuned models for domain-specific performance. Use general models for reasoning and specialized models for generation.

Streaming Responses: Implement token streaming for better user experience in conversational interfaces:

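    from langchain.chains import LLMChain
    from langchain_core.callbacks.streaming_stdout import StreamingStdOutCallbackHandler
    from langchain_openai import ChatOpenAI

    llm = ChatOpenAI(
        model="gpt-4",
        streaming=True,
        callbacks=[StreamingStdOutCallbackHandler()]
    )

    # prompt and input_data are placeholders for your own prompt template and input
    chain = LLMChain(llm=llm, prompt=prompt)
    chain.run(input_data)  # Tokens stream to stdout as they're generated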

Testing and Debugging LangChain Automation

Reliable LLM automation with LangChain requires thorough testing strategies:

Unit Tests for Chains:

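    from langchain.chains import LLMChain
    from langchain_core.language_models.fake_chat_models import FakeListChatModel

    # classification_prompt is the prompt defined in the email example above

    def test_classification_chain():
        # A fake chat model returns canned responses, so no API call is made
        # (a bare Mock() is not a valid model for LLMChain)
        fake_llm = FakeListChatModel(responses=["urgent"])
        chain = LLMChain(llm=fake_llm, prompt=classification_prompt)
        result = chain.run(email_content="Emergency: System down!")
        assert result == "urgent"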

Integration Tests with Real APIs: Test against actual LLM providers in staging environments with test data.

Evaluation Metrics: Implement systematic evaluation using test datasets. Measure accuracy, relevance, and consistency across prompt variations.
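Two of these metrics are easy to compute once the system under test is wrapped in a function. The sketch below assumes a hypothetical `classify` callable that takes text and returns a label; the dataset and the keyword classifier are made-up examples.

```python
from collections import Counter
from typing import Callable


def accuracy(classify: Callable[[str], str],
             dataset: list[tuple[str, str]]) -> float:
    """Fraction of examples whose predicted label matches the expected one."""
    if not dataset:
        raise ValueError("dataset is empty")
    correct = sum(1 for text, expected in dataset if classify(text) == expected)
    return correct / len(dataset)


def consistency(classify: Callable[[str], str], text: str, runs: int = 5) -> float:
    """Share of repeated runs that agree with the most common answer."""
    answers = Counter(classify(text) for _ in range(runs))
    return answers.most_common(1)[0][1] / runs


def keyword_classifier(text: str) -> str:
    # Deterministic stand-in for an LLM-backed classifier.
    return "urgent" if "down" in text.lower() else "general"


if __name__ == "__main__":
    examples = [("System down!", "urgent"), ("Hello", "general"), ("Refund please", "complaint")]
    print(accuracy(keyword_classifier, examples))
    print(consistency(keyword_classifier, "Hello"))
```

Running the same evaluation for each prompt variation gives a like-for-like comparison before a new prompt reaches production.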

For debugging, enable verbose mode (verbose=True, as in the agent and conversation examples above) and examine the intermediate outputs.

LangChain Alternatives and When to Use Them

While LangChain dominates, alternative frameworks offer advantages in specific scenarios:

- LlamaIndex: Optimized specifically for RAG applications with superior indexing strategies

- Semantic Kernel: Microsoft’s framework, well-integrated with Azure services

- Haystack: Production-ready search and Q&A with extensive NLP pipeline support

- AutoGPT/BabyAGI: Experimental autonomous agent frameworks for research

Choose LangChain for general-purpose LLM automation, LlamaIndex for document-heavy applications, and Semantic Kernel when deeply invested in Microsoft ecosystems. Learn more about Python framework selection criteria.

Real-World LLM Automation Use Cases

Organizations across industries are implementing LLM automation with LangChain:

Customer Support: Automated ticket triage, response generation, and escalation routing can reduce response times by as much as 60-80%.

Content Generation: Marketing teams automate blog outlines, social media content, and email campaigns while maintaining brand voice consistency.

Code Assistance: Development teams build internal code review agents, documentation generators, and debugging assistants.

Legal Document Analysis: Law firms automate contract review, clause extraction, and compliance checking across thousands of documents.

Financial Research: Investment firms automate earnings call analysis, news sentiment tracking, and report generation.

Explore industry-specific AI automation case studies for implementation insights.

Troubleshooting Common LangChain Issues

Token Limit Exceeded: Implement chunking strategies or switch to models with larger context windows. GPT-4 Turbo supports 128K tokens.

Slow Response Times: Cache embeddings, implement parallel processing for independent operations, consider smaller models for simple tasks.

Inconsistent Outputs: Lower temperature settings, add output parsers for structured responses, implement validation layers.

High API Costs: Implement aggressive caching, use smaller models where appropriate, batch operations, optimize prompt length.
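For the Inconsistent Outputs item, a validation layer can be as simple as asking the model for JSON and checking the result before using it. The sketch below is standard-library only; `parse_reply` and the required keys are hypothetical and mirror the category/response pair from the email example.

```python
import json


def parse_reply(raw: str, required: tuple[str, ...] = ("category", "response")) -> dict:
    """Extract the JSON object from model output and check its keys."""
    # Models often wrap JSON in extra prose, so locate the outermost braces.
    start, end = raw.find("{"), raw.rfind("}")
    if start == -1 or end <= start:
        raise ValueError("no JSON object found in model output")
    data = json.loads(raw[start:end + 1])  # raises ValueError on invalid JSON
    missing = [key for key in required if key not in data]
    if missing:
        raise ValueError(f"missing keys: {missing}")
    return data


if __name__ == "__main__":
    reply = 'Sure! {"category": "urgent", "response": "On it."} Hope that helps.'
    print(parse_reply(reply))
```

A caller can catch `ValueError` and retry the request, which turns malformed output into a bounded retry instead of a downstream failure.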

Future Trends in LLM Automation

As we progress through 2026, several trends are reshaping LLM automation with LangChain:

- Multimodal Agents: Integration of vision, audio, and text processing in unified automation workflows

- Local LLM Deployment: Running open-source models locally for privacy-sensitive applications

- Agent Specialization: Fine-tuned agents for narrow domains outperforming general-purpose models

- Guardrails and Safety: Built-in content filtering and ethical constraint systems becoming standard

- Hybrid Human-AI Workflows: Seamless collaboration patterns where AI augments rather than replaces human workers

Conclusion: Mastering LLM Automation with LangChain

Building effective LLM automation with LangChain requires understanding both the framework’s capabilities and the underlying principles of large language models. From simple chains to complex autonomous agents, LangChain provides the abstractions necessary to create production-ready AI systems without drowning in implementation details.

Start with basic chains to understand data flow and prompt engineering. Progress to RAG implementations for knowledge-augmented applications. Finally, explore agents for tasks requiring autonomous decision-making. Each level builds on previous concepts while introducing new capabilities.

Remember that LLM automation is an iterative process. Begin with narrow use cases, measure results, refine prompts, and gradually expand scope. Implement proper testing, monitoring, and error handling from the start—these investments pay dividends as systems scale.

The landscape of LLM automation with LangChain continues evolving rapidly. Stay current with framework updates, experiment with new models, and actively participate in the developer community. The techniques covered in this guide provide a solid foundation for building sophisticated AI automation that delivers tangible business value throughout 2026 and beyond.

Mark is a senior content editor at Text-Center.com and has more than 20 years of experience with Linux and Windows operating systems. He also writes for Biteno.com.